MEPAC AI

We developed software solutions with the MEPAC company during a three-year project. Our AI-based algorithms achieved industry-standard precision. At the core of our software were neural networks that measure the depth of engraved patterns, segment the area viable for engraving, and classify welds as good or bad in real time.

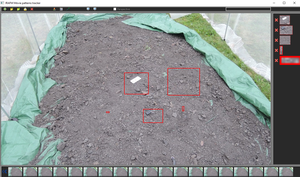

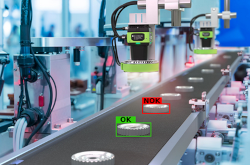

Object detection in industry

We refined the object detection pipeline to suit the needs of our industrial partner. The data had to be carefully processed and unified. We designed custom augmentations to counter class imbalance and the low number of samples. Our approach, tailored to the exact needs of our partner, reached a near-perfect score.

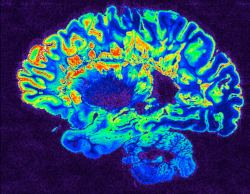

Processing medical data

We created an AI-based algorithm that helps medical personnel navigate brain scan data. Our solution allows doctors to locate any patient's brain scans in a global coordinate system. Moreover, it recognizes and segments structures of interest. This work focused primarily on Alzheimer's disease.

Approximating a goniophotometer

In cooperation with an industrial partner, we developed an AI-based approach that approximates the goniophotometer measuring process. A goniophotometer is a device that measures the light emitted by a light source in different directions. Our algorithm produces the same output as the goniophotometer in a fraction of the time, using only camera image data.

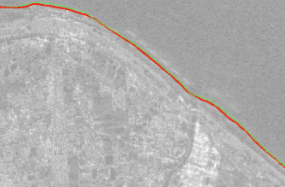

Signate 4th Tellus satellite challenge: coastline detection

Continuous monitoring of the shore plays an essential role in designing strategies to protect it against erosion. We present a solution that precisely extracts the coastline from SAR image data, even where the coastline is not recognizable by a human. Our solution was validated against the coastline's real GPS location during Signate's competition, where it finished as runner-up among 109 teams from around the world.

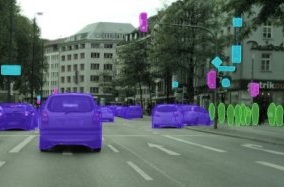

Poly-YOLO

We present Poly-YOLO, a new version of YOLO extended with instance segmentation. Compared with YOLOv3, Poly-YOLO has only 60% of the trainable parameters yet improves mAP by a relative 40%. Poly-YOLO performs instance segmentation using bounding polygons. The network is trained to detect size-independent polygons defined on a polar grid.
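To make the idea of size-independent polygons on a polar grid concrete, here is a minimal Python sketch. It assumes vertices are expressed relative to the bounding-box center, distances are divided by the box diagonal, and the full circle is split into equal angular sectors holding at most one vertex each. The function names, the sector count of 24, and the keep-the-farther-vertex rule are illustrative choices, not the Poly-YOLO code.

```python
import math


def encode_polygon_polar(vertices, box, n_sectors=24):
    """Encode polygon vertices on a polar grid centered in the bounding box.

    vertices: list of (x, y) image coordinates.
    box: (x_min, y_min, x_max, y_max) of the object.
    Returns n_sectors entries, each None (no vertex in that sector)
    or a (normalized_distance, angle_in_radians) pair.
    """
    x_min, y_min, x_max, y_max = box
    cx, cy = (x_min + x_max) / 2, (y_min + y_max) / 2
    # Dividing by the box diagonal makes the encoding independent of object size.
    diagonal = math.hypot(x_max - x_min, y_max - y_min)
    sector_width = 2 * math.pi / n_sectors
    sectors = [None] * n_sectors
    for x, y in vertices:
        angle = math.atan2(y - cy, x - cx) % (2 * math.pi)  # map to [0, 2*pi)
        dist = math.hypot(x - cx, y - cy) / diagonal
        k = int(angle // sector_width) % n_sectors
        # Illustrative rule: if two vertices share a sector, keep the farther one.
        if sectors[k] is None or dist > sectors[k][0]:
            sectors[k] = (dist, angle)
    return sectors


def decode_polygon_polar(sectors, box):
    """Map the polar encoding back to (x, y) vertices inside the given box."""
    x_min, y_min, x_max, y_max = box
    cx, cy = (x_min + x_max) / 2, (y_min + y_max) / 2
    diagonal = math.hypot(x_max - x_min, y_max - y_min)
    return [
        (cx + d * diagonal * math.cos(a), cy + d * diagonal * math.sin(a))
        for d, a in (s for s in sectors if s is not None)
    ]
```

Because distances are relative to the box diagonal, the same shape annotated at twice the size yields the same normalized distances, which is what lets one set of outputs describe objects at any scale.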

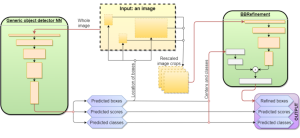

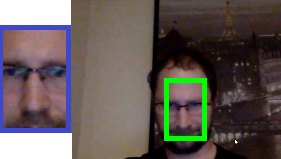

Refinement of bounding box predictions

We present a conceptually simple yet powerful and flexible scheme for refining bounding box predictions. Our approach can be built on top of an arbitrary object detector and produces more precise predictions. Because the problem is transformed into a domain where BBRefinement does not need to handle multiscale detection, recognition of the object's class, computing confidence, or multiple detections, training is much more effective. As a result, it can refine even COCO's ground-truth labels into a more precise form. Refinement is fast and runs in real time on standard hardware.
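One way to read the domain transformation described above is that the refiner only ever sees a crop around a single detection. The Python sketch below shows just the coordinate bookkeeping under that assumption. The 20% margin, the normalized crop coordinates, and the `predict_local` callable (a hypothetical stand-in for a trained network that would receive the resized image crop) are illustrative, not the BBRefinement implementation.

```python
def crop_window(box, margin=0.2):
    """Enlarge a detected box (x0, y0, x1, y1) by a relative margin on each side."""
    x0, y0, x1, y1 = box
    w, h = x1 - x0, y1 - y0
    return (x0 - margin * w, y0 - margin * h, x1 + margin * w, y1 + margin * h)


def to_crop_coords(box, window):
    """Express an image-space box in normalized [0, 1] coordinates of the window."""
    wx0, wy0, wx1, wy1 = window
    ww, wh = wx1 - wx0, wy1 - wy0
    x0, y0, x1, y1 = box
    return ((x0 - wx0) / ww, (y0 - wy0) / wh, (x1 - wx0) / ww, (y1 - wy0) / wh)


def from_crop_coords(box, window):
    """Inverse of to_crop_coords: map a normalized box back to image space."""
    wx0, wy0, wx1, wy1 = window
    ww, wh = wx1 - wx0, wy1 - wy0
    x0, y0, x1, y1 = box
    return (wx0 + x0 * ww, wy0 + y0 * wh, wx0 + x1 * ww, wy0 + y1 * wh)


def refine(box, predict_local, margin=0.2):
    """Refine one detection's box; class and confidence stay with the original detector."""
    window = crop_window(box, margin)
    # The hypothetical refiner works in crop coordinates only, so it never has to
    # deal with object scale, classes, confidence, or multiple objects.
    local_box = predict_local(window, to_crop_coords(box, window))
    return from_crop_coords(local_box, window)
```

For example, a refiner that answers `(0.25, 0.25, 0.75, 0.75)` for the box `(10, 20, 50, 100)` moves it to `(16, 32, 44, 88)` in image coordinates.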

Signate 3rd AI Edge Contest

The goal of this worldwide competition was to create an algorithm that detects and tracks all cars and pedestrians. Our solution, based on our own Poly-YOLO research, finished as runner-up.

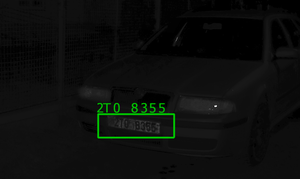

Automatic license plate recognition

By car license plate recognition, we mean a software system that processes images and provides an alphanumeric transcription of the car plates they contain. We divide the task into four sub-tasks: license plate localization, license plate extraction, character segmentation, and character recognition. The application has been provided to our commercial partner under an exclusive license.
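The page does not say how each sub-task is solved. As an illustration of the character-segmentation step only, the Python sketch below uses a classic vertical projection profile, in which columns containing no ink separate characters. It assumes the plate has already been localized, extracted, and binarized; `segment_characters` and `min_width` are hypothetical names.

```python
def segment_characters(binary_plate, min_width=1):
    """Split a binarized plate image into character column ranges.

    binary_plate: list of rows, each a list of 0/1 values (1 = character ink).
    Returns a list of half-open (start_col, end_col) ranges, one per character.
    """
    n_cols = len(binary_plate[0])
    # Vertical projection: amount of ink in each column.
    profile = [sum(row[c] for row in binary_plate) for c in range(n_cols)]
    segments = []
    start = None
    for c, ink in enumerate(profile):
        if ink > 0 and start is None:
            start = c  # a character begins
        elif ink == 0 and start is not None:
            if c - start >= min_width:  # drop specks narrower than min_width
                segments.append((start, c))
            start = None
    if start is not None and n_cols - start >= min_width:
        segments.append((start, n_cols))  # character touching the right border
    return segments
```

Each returned column range would then be cropped and passed to the character-recognition step; raising `min_width` suppresses thin noise such as bolts or dirt.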

Identity tracking


We focus on visual tracking in night-time recordings of insects. The goal is instance tracking, with emphasis on correctly matching identities. We propose a pattern-tracking mechanism based on the F-transform and implement user-friendly software for handling the movies.

Signate tobacco box detection and recognition

This project refers to Signate's 'Tobacco detection and classification' competition, where we finished fourth out of more than one thousand registered competitors. The competition had two phases. The first was a development phase in which participants could make up to three submissions per day to see their score. The second phase, held after the first, determined the final leaderboard by evaluating each participant's selected submission on new, unseen data. The training dataset contained approximately 26,000 cigarette boxes, which had to be detected in shelf images and each assigned to one of 223 predefined classes.

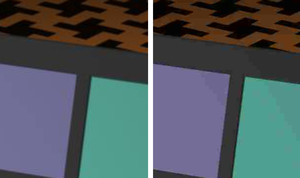

Synthetic Data Generating Framework

Training large computer vision models requires large annotated image datasets, which can be costly to assemble manually. To avoid this problem, we created a Synthetic Data Generating Framework based on Unreal Engine that can generate a million photorealistic images in a day. Together with the images, our framework produces detailed automatic annotations with minimal overhead.

Dragonflies classification

In cooperation with the department of biology, we developed algorithms for classifying dragonflies. Correctly monitoring the populations of different dragonfly species is vital for evaluating human impact on the environment. We used state-of-the-art neural network approaches.

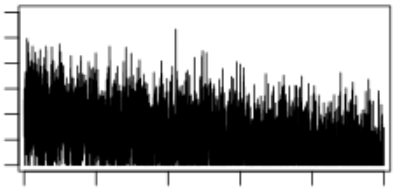

Real-time time series analysis

We propose an efficient algorithm for time-series classification based on the F-transform technique. The algorithm passed all tests and, moreover, can perform classification online, as the data arrive.
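For readers unfamiliar with the F-transform, the Python sketch below computes the discrete direct F-transform of a sampled series over a uniform triangular fuzzy partition, together with its inverse. The direct transform turns a window of samples into a few weighted local averages, a compact feature vector that a classifier could consume. The function names and the choice of triangular basic functions are assumptions; this is not the authors' classification algorithm.

```python
def triangular_partition(a, b, n):
    """Nodes and half-width h of a uniform triangular fuzzy partition of [a, b] (n >= 2)."""
    h = (b - a) / (n - 1)
    return [a + k * h for k in range(n)], h


def basic_function(x, node, h):
    """Triangular membership A_k(x); the basic functions sum to 1 at every point of [a, b]."""
    return max(0.0, 1.0 - abs(x - node) / h)


def direct_f_transform(xs, ys, nodes, h):
    """F_k = sum_i y_i * A_k(x_i) / sum_i A_k(x_i) for every node x_k."""
    components = []
    for node in nodes:
        weights = [basic_function(x, node, h) for x in xs]
        total = sum(weights)
        if total == 0:
            raise ValueError("partition too fine: a basic function covers no samples")
        components.append(sum(w * y for w, y in zip(weights, ys)) / total)
    return components


def inverse_f_transform(components, nodes, h, x):
    """Reconstruct the series at x as sum_k F_k * A_k(x)."""
    return sum(c * basic_function(x, node, h) for c, node in zip(components, nodes))
```

With 11 samples on [0, 10] and 6 nodes, the series is summarized by 6 numbers. For a linear series, the interior components equal the series at their nodes, while the two boundary components are biased because their basic functions are cut off at the ends of the domain.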

Night-movie denoising

We present a cascade of filters based on a fuzzy representation of images. This representation captures the uncertainty in a pixel's intensity by means of a fuzzy set. Our approach provides results similar to standard approaches at a significantly reduced computational time.

Real-time corner detection

The F1-transform is used to extract feature points in the edge-detection step.
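The F1-transform replaces each constant F-transform component with a linear one, c0 + c1(x - x_k), and the slope c1 estimates the local derivative, which is why it suits edge detection. A minimal one-dimensional Python sketch follows, assuming a uniform triangular partition with node spacing h; in an image, the same computation would run along rows and columns. The function name is hypothetical, and the slope formula relies on samples lying symmetrically around each node, which holds at the interior nodes of a uniform grid.

```python
def f1_components(xs, ys, nodes, h):
    """Return (c0, c1) for each node: the local linear fit c0 + c1 * (x - node)."""
    result = []
    for node in nodes:
        # Triangular basic function A_k centered at the node with half-width h.
        w = [max(0.0, 1.0 - abs(x - node) / h) for x in xs]
        s0 = sum(w)
        s2 = sum(wi * (x - node) ** 2 for wi, x in zip(w, xs))
        if s0 == 0 or s2 == 0:
            raise ValueError("each node needs a sample other than itself within distance h")
        c0 = sum(wi * y for wi, y in zip(w, ys)) / s0
        c1 = sum(wi * y * (x - node) for wi, y, x in zip(w, ys, xs)) / s2
        result.append((c0, c1))
    return result
```

On a step signal, the largest |c1| appears at the node nearest the jump, so thresholding |c1| yields candidate edge points.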

Image plagiarism detection

We propose a method for finding a plagiarized image in a database based on the F-transform. The F-transform significantly reduces the dimension of the domain and therefore speeds up the whole search.
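The dimension reduction can be illustrated with a 2D F-transform: an image is replaced by a small grid of weighted local averages, and images are compared by the distance between these component vectors. The Python sketch below assumes grayscale images given as lists of rows, uniform triangular partitions along both axes, and a Euclidean nearest-neighbor search. The function names and the 4×4 component grid are illustrative, not the published method.

```python
import math


def fuzzy_partition(size, n):
    """weights[k][i] = A_k(i): n triangular basic functions covering pixels 0..size-1 (2 <= n <= size)."""
    h = (size - 1) / (n - 1)
    return [[max(0.0, 1.0 - abs(i - k * h) / h) for i in range(size)] for k in range(n)]


def f_transform_2d(image, n_rows=4, n_cols=4):
    """Direct 2D F-transform: returns n_rows * n_cols components as a flat list."""
    height, width = len(image), len(image[0])
    row_basis = fuzzy_partition(height, n_rows)
    col_basis = fuzzy_partition(width, n_cols)
    components = []
    for a in row_basis:
        for b in col_basis:
            num = sum(a[i] * b[j] * image[i][j]
                      for i in range(height) if a[i]
                      for j in range(width) if b[j])
            # The basis is separable, so the normalizing sum factorizes.
            components.append(num / (sum(a) * sum(b)))
    return components


def build_index(database, n_rows=4, n_cols=4):
    """Precompute the compact descriptor of every stored image once."""
    return {name: f_transform_2d(img, n_rows, n_cols) for name, img in database.items()}


def find_most_similar(query, index, n_rows=4, n_cols=4):
    """Return (name, distance) of the stored image closest to the query."""
    q = f_transform_2d(query, n_rows, n_cols)
    return min(((name, math.dist(q, desc)) for name, desc in index.items()),
               key=lambda item: item[1])
```

For an 8×8 image, each comparison then involves 16 numbers instead of 64 pixels, and the gap widens quickly for real image sizes.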

Security camera

The focus is on security cameras in smart cities, where we show future possibilities of automated image processing and behavior analysis systems.

Driver's assistant

The application monitors the road in real time and alerts the driver with a sound or graphic alarm. Moreover, the drive can be recorded in a loop and stored. The application also displays accurate speed in either km/h or mph.

Real-time object detection

This work builds on our F-transform-based pattern-matching algorithm and shows how an arbitrary pattern can be detected in a movie in real time on low-powered hardware.

Drone autonomous flying

We extend the abilities of the Parrot Bebop 2 drone, equipped with a front camera, with an object-tracking application. The drone streams video to a mobile phone, where the application processes the video, tracks the object, and automatically controls the drone's movement.

Image compression

We propose a new hybrid image compression algorithm that combines the F-transform and JPEG. We show that the hybrid algorithm achieves significantly higher decompressed image quality than pure JPEG.